Most enterprise AI efforts are failing for reasons that have nothing to do with models or hype. That is the argument JG Chirapurath makes in his Fortune article, and he backs it with the same numbers emerging from MIT’s Project NANDA. After reading his piece and comparing it with the MIT findings, his diagnosis is hard to argue with.

Where I extend his view is in looking beyond enterprise walls, because I believe decentralized compute can solve the same core problem in a different way.

What Chirapurath Actually Says

Chirapurath points out that enterprises invested $30 to $40 billion in generative AI pilots in 2024. According to an MIT study, 95% of those pilots produced no measurable business return. His view is that most executives are looking in the wrong direction. The failures are not in the models. They are in the data layer under the models.

He describes a pattern he saw throughout his career at Microsoft and SAP:

- Enterprises spend heavily on new AI models and applications.

- The data infrastructure feeding them has not been modernized.

- Legacy analytics systems were built for overnight batch jobs and limited datasets.

- AI requires continuous processing, full datasets, and real-time inference.

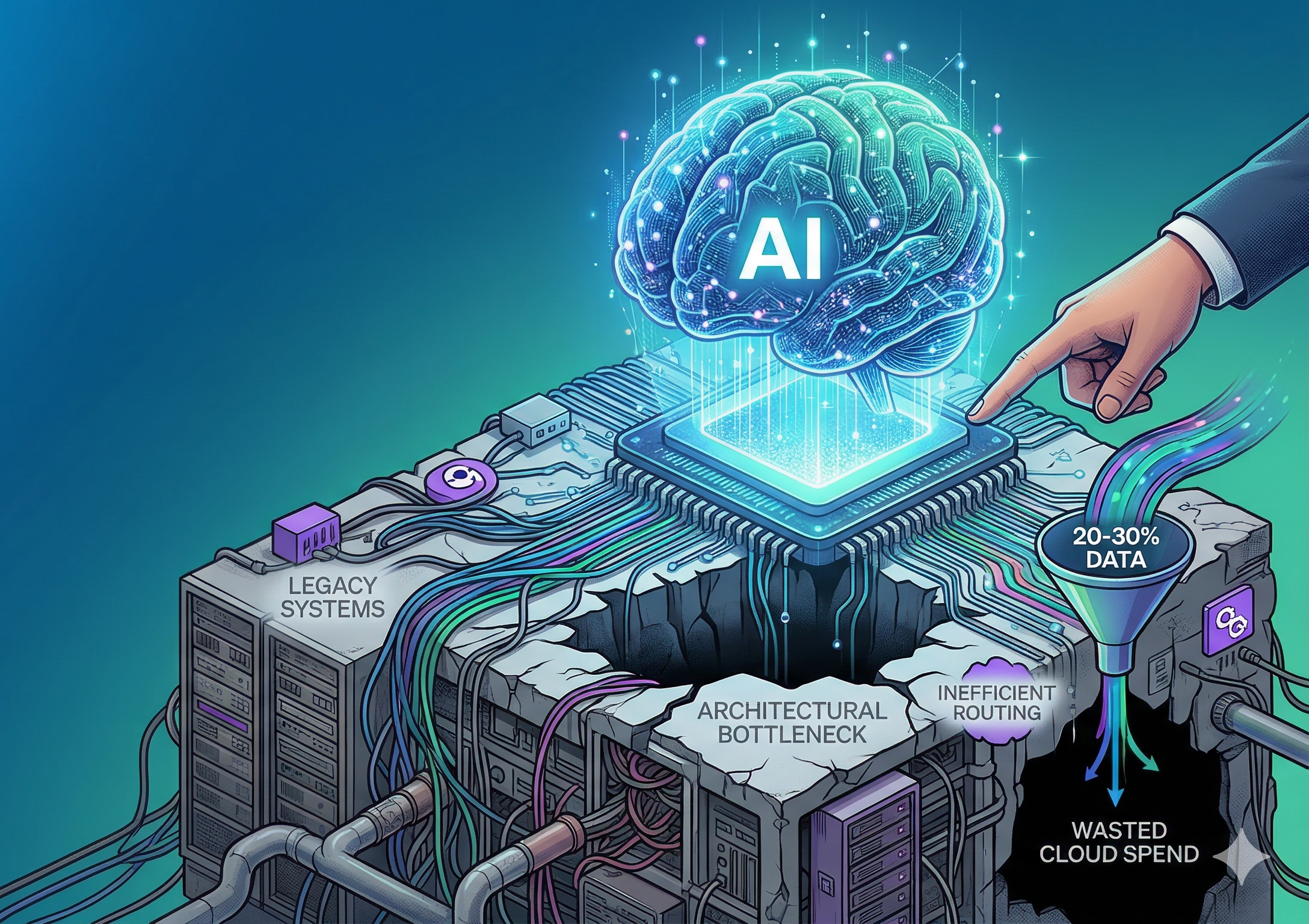

Infrastructure designed for analytics cannot keep up with this shift. The stack beneath AI cannot process enough data, fast enough, at a cost enterprises can tolerate.

The Cost Trap

About a quarter of enterprise cloud spending is wasted due to inefficient resource use, much of it tied to data processing. A company spending $100 million per year on cloud services can easily lose tens of millions without realizing it.

He also describes a recurring pattern across hundreds of deployments:

- Most enterprises process only 20% to 30% of the data they have.

- Processing all of it would increase computing costs by 5 to 10 times.

- One Fortune 100 retailer had 15 years of customer interaction data but could only afford to process 30% of it.

Their AI systems were essentially working with a limited view of their own business, so the results were limited as well. Once that cycle starts, it feeds itself: Limited data produces limited output. Leadership questions the value. Budgets shrink. Even less data gets processed. Eventually, the pilot shuts down.

The real issue is not a lack of ambition. It is an architectural bottleneck.

The Architectural Mismatch

Modern compute is no longer uniform. Infrastructure now involves CPUs, GPUs, FPGAs, and custom AI accelerators across hybrid clouds and distributed environments.

The problem is that most data frameworks still assume a single hardware profile. They do not automatically route the right job to the right processor type. Expensive accelerators sit idle while CPUs carry workloads they are not suited for. Enterprises pay premium prices for advanced hardware but still operate at outdated performance levels. Until the software layer learns to match workloads to the best available hardware, the efficiency gap will continue to slow AI progress.

Why Optimization Beats Overhaul

CIOs do not have the budget or appetite to rip out and replace infrastructure. The companies that succeed with AI do not rebuild; they optimize what they already own:

- Major E-commerce Company: Processed half a petabyte per day, tripled performance, and reduced costs by 80% without changing any code or migrating anything.

- Social Platform (350M users): Doubled its performance and cut costs in half using the exact same strategy.

When data processing is routed intelligently across CPUs, GPUs, and specialized processors, organizations can unlock major improvements without large-scale migrations.

The Strategic Inflection Point

The next decade’s leaders will not be defined by the biggest models or the flashiest applications. They will be the organizations that solve the data economics problem and are able to process complete datasets at costs they can sustain. This is the major blind spot across enterprises today and the defining opportunity for those who address it.

Where My Perspective Extends His

Everything above comes directly from Chirapurath’s article. I agree with his diagnosis: the failures come from architectural limits in the data and compute layer.

But where Chirapurath focuses on optimization within an enterprise’s existing hardware, I believe we can push this logic further.

GNUS.ai is my own system, so I know what it does and what it is built for. In my view, decentralized networks allow organizations to route workloads beyond their internal hardware and tap into a global pool of idle compute.

Instead of buying more servers or expanding cloud clusters, companies can use underused GPUs from devices around the world. That changes the economics of data processing in significant ways, especially for organizations trapped by the reality that processing full datasets would cause their bills to spike 10x.

This idea does not replace what Chirapurath recommends—it builds on it. He calls for smarter routing of workloads across heterogeneous compute. I believe that logic naturally extends to routing across decentralized compute as well. This is how organizations can finally process full datasets without budget limits becoming the choke point.

Conclusion

Chirapurath’s article provides one of the clearest explanations for why AI is failing inside enterprises, perfectly aligning with the MIT study. His focus on the data layer is correct.

Where I expand the discussion is in exploring what happens when optimization pushes beyond a single company’s infrastructure. Decentralized compute can take the architectural improvements he describes and extend them to a global level. Unlocking unused compute is the key to finally making AI sustainable at scale.

LinkedIn

LinkedIn